Implementation Steps

The LLM deployment was executed through a disciplined, phased approach that prioritized

accuracy, security, and production readiness at each stage:

-

Use Case Definition & Data Audit: Defined the three primary LLM tasks

(contract summarization, clause extraction, risk flagging), audited available training

data, and established ground-truth evaluation sets with the legal domain experts.

-

Base Model Benchmarking: Ran structured evaluations of 5 open-weight LLMs

on the client's legal tasks using ROUGE, BERTScore, and human evaluation metrics to select

the best-fit base model.

-

Dataset Preparation & Fine-Tuning: Cleaned and formatted 50,000+ legal

document samples into instruction-tuning format; ran QLoRA fine-tuning on 4× A100 GPUs

with iterative evaluation checkpoints.

-

Inference Server Setup: Configured vLLM inference server with OpenAI-compatible

API endpoints, enabling seamless integration with existing product backends with minimal

code changes.

-

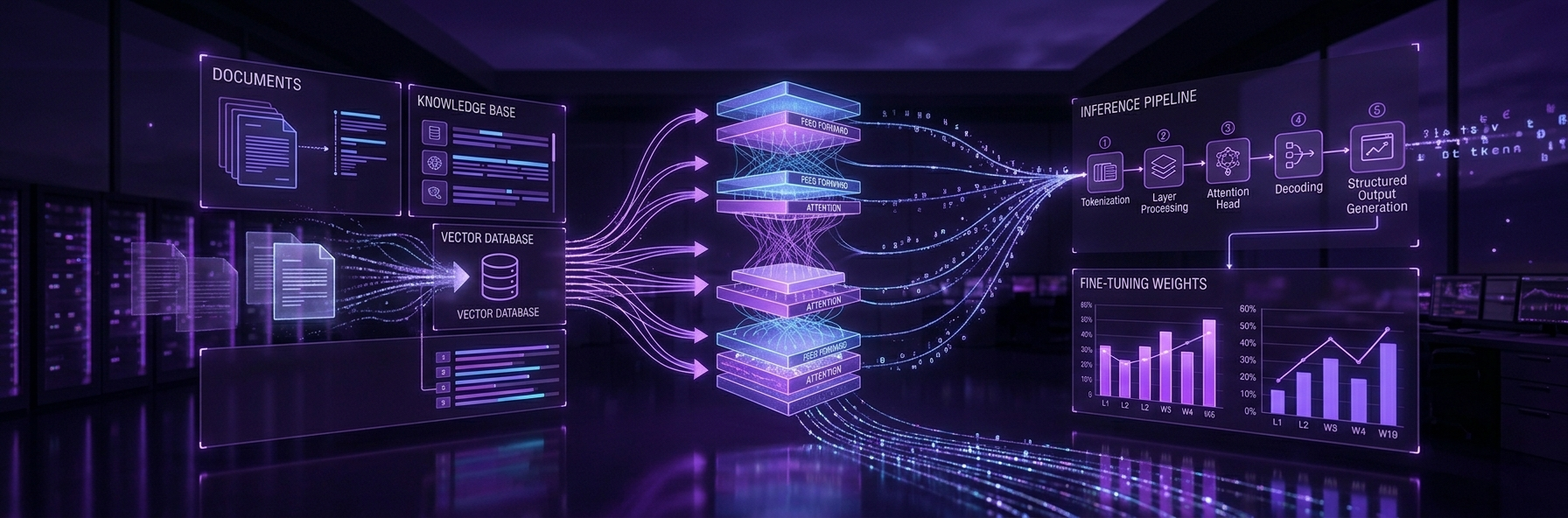

RAG Pipeline Construction: Built document ingestion pipelines, embedded

the contract corpus into a Qdrant vector store, and integrated semantic retrieval into the

LLM prompt chain for grounded document Q&A.

-

Guardrails & Security Hardening: Implemented PII detection, prompt injection

defenses, and output content filtering; conducted red-team adversarial testing on the deployed

model before go-live.

-

LLMOps Pipeline & CI/CD: Built model registry workflows, automated

evaluation gates in the CI/CD pipeline, and configured canary deployment with automatic

rollback on quality regression.

-

Pilot & Production Rollout: Launched with 10 internal legal analysts for

two weeks of shadow testing, incorporated feedback, then rolled out to the full product

user base with live monitoring from day one.

Results

The private LLM deployment delivered transformative gains in accuracy, cost, latency, and

compliance — enabling features that were previously impossible with public API approaches:

-

Domain Accuracy Improvement: Fine-tuned model achieved 38% higher

accuracy on legal clause extraction compared to the best-performing general-purpose

public LLM API tested during evaluation.

-

Inference Latency: Average response time dropped to under 800ms

for document summarization tasks, enabling real-time in-product usage for the first time.

-

Cost Reduction: Private deployment reduced per-query inference cost by

78% compared to public API pricing at equivalent query volumes.

-

Hallucination Rate Reduction: Guardrails and RAG grounding reduced

hallucinated outputs by 91% compared to vanilla LLM API responses on legal queries.

-

Full Data Privacy: Zero client documents transmitted to any external API —

achieving 100% data residency compliance and clearing the compliance team's blocker

to production launch.

-

Legal Analyst Productivity: Contract review time per document reduced by

60%, enabling analysts to process three times more contracts in the same workday.

-

Stable Model Versioning: Zero unplanned model behavior changes in production

post-launch, compared to three breaking changes experienced with the public API in the

prior six months.

Conclusion

The production LLM deployment demonstrated that private, domain-optimized large language

models consistently outperform general-purpose public APIs on specialized enterprise tasks —

while delivering superior privacy, cost efficiency, and operational control. By combining

fine-tuning, RAG, guardrails, and a robust LLMOps pipeline, the legal-tech client transformed

its document workflows, dramatically improved analyst productivity, and established a compliant,

scalable AI foundation ready to power the next generation of its product. The engagement proved

that with the right architecture, enterprises no longer need to choose between AI capability

and data security.